Nvidia's Strategic Launch at GTC 2026

Nvidia Corporation, a leader in artificial intelligence and graphics processing technology, unveiled the Groq 3 language processing unit (LPU) at its annual GTC 2026 developer conference in San Jose, California. The Groq 3 is a specialized chip designed to improve inference performance for multiagent workloads. The launch extends Nvidia's portfolio of computing products aimed at data center operators and AI developers.

The Significance of the Groq 3 LPU

The Groq 3 LPU is engineered specifically for inference, the stage at which a trained model produces outputs from new inputs, which is critical in AI applications that require real-time decision-making and responsiveness. Unlike traditional graphics processing units (GPUs), which are optimized for a wide range of tasks, the Groq 3 is dedicated to processing large volumes of inference data. This specialization is intended to improve efficiency and performance in complex multiagent interactions, making the chip relevant to sectors such as autonomous vehicles, smart cities, and robotics.

Market Dynamics and Competitive Landscape

The Groq 3 arrives as demand for AI-driven solutions surges across multiple industries. As companies rely more heavily on data to drive decisions, the need for powerful and efficient processing units is growing. Nvidia's competitors, including Intel and AMD, are also ramping up their investments in AI technologies, intensifying the race for market share in this lucrative sector.

Nvidia's strategic focus on inference chips positions it favorably within the market. The Groq 3 is expected not only to bolster Nvidia's existing product lineup but also to attract new clients seeking dedicated hardware for their AI workloads. As enterprises continue to explore the potential of AI, demand for specialized hardware that handles complex tasks efficiently will likely grow.

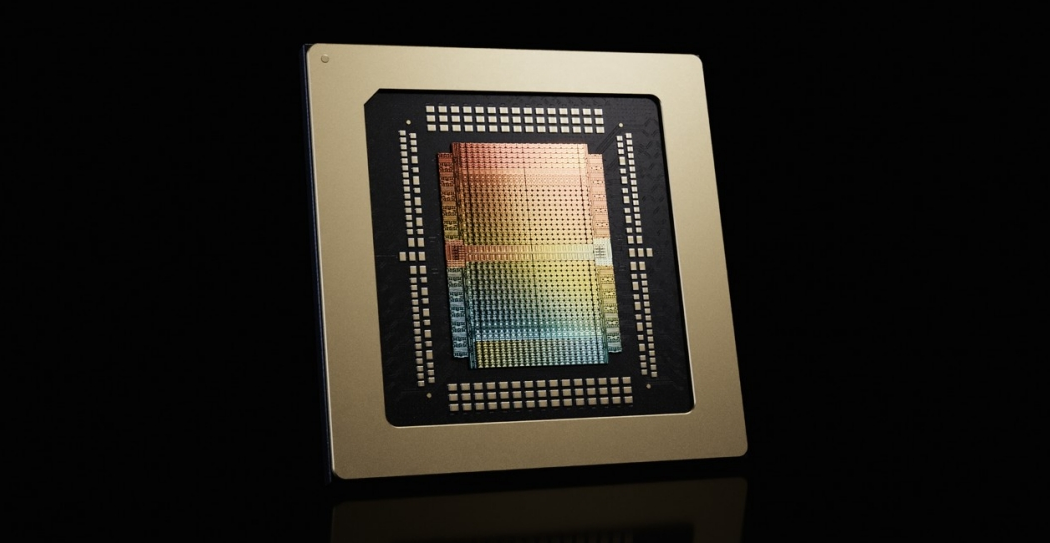

Technical Specifications and Innovations

The Groq 3 LPU includes several technical advancements that set it apart from its predecessors. It features a highly parallel architecture that processes multiple data streams simultaneously, which reduces latency and raises throughput. The chip also integrates machine learning algorithms directly into its architecture, enabling faster model training and inference times.

One of the standout features of the Groq 3 is its ability to scale within existing data center infrastructure, so organizations can integrate the chip into their current systems without extensive overhauls. The chip's energy-efficient design also aligns with the growing emphasis on sustainability in technology development, allowing data centers to reduce their carbon footprint while improving performance.

Implications for AI Development and Deployment

The launch of the Groq 3 LPU is likely to have significant implications for AI development and deployment. As organizations adopt more sophisticated AI models, efficient inference processing becomes essential. The Groq 3 is positioned to meet this demand and to let developers build more complex applications that draw on real-time data inputs.

Moreover, the introduction of such specialized